This is part 2 of my future phone 2025 vision article; for part 1, check out the post here. This part details the features that could be in Phone 2025!

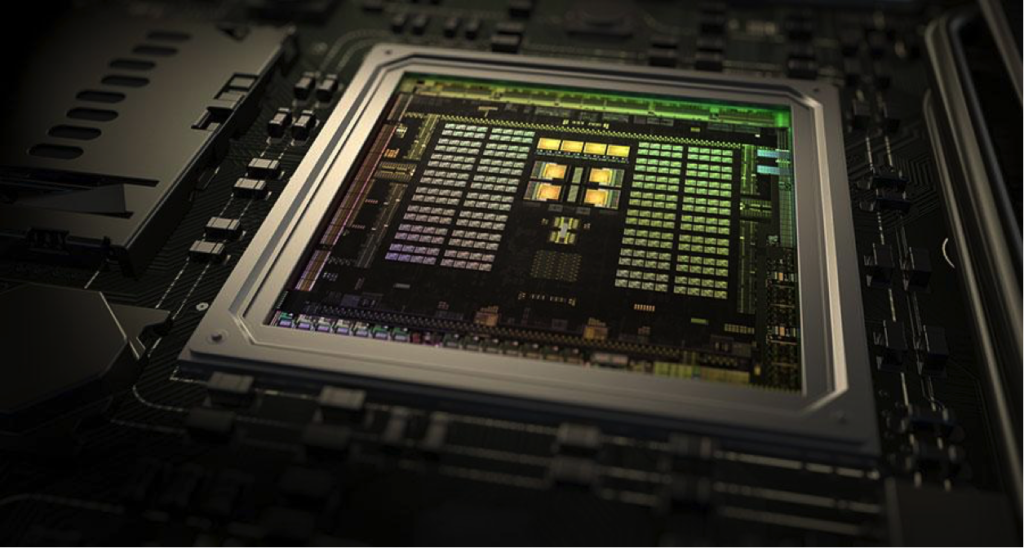

Neuromorphic processor with an “AI-core”

Existing smartphones have been demonstrated to digitize documents, translate signs, drive a car and solve a Rubik’s cube. The 2025 phone will go further and become a butler: providing information that you didn’t know you needed, giving answers and solutions as you command it, and learning your habits, nuances and behaviors to essentially offset human weaknesses.

For that to happen, the processor needs to be powerful – as powerful as a human brain, but without its caveats, such as forgetfulness. The processor will be a multi-SoC (system on chip) and will have the standard CPU-GPU cores, but with a Vision Processing Unit (VPU) and a neuromorphic core or Neural Processing Unit (NPU). This CPU-GPU-VPU-NPU processor will pave the way for Artificial intelligence (AI) of the future.

For the sake of simplicity, I call this neuromorphic processor an Artificially Intelligent Neural Processing Unit (AI-NPU). With machine-learning algorithms and neural-network (NN) circuitry, this AI-NPU core will feature deep-learning capability and the smartphone will learn to anticipate what I want to do next, my schedules, habits, desires and needs in a more human-like manner than the semantic feedback we have today.

A neuromorphic core is a processor modeled after the human brain, designed to process sensory data such as images and sound and respond to changes in that data in ways not specifically programmed. A learning and constantly evolving core computing architecture is tremendously efficient as it finds new and better ways to process a task. It’s like learning how to ride a bicycle. Despite the complexity of the activity, after a few tries, the task becomes ingrained and effortless, and the brain now automatically maintains balance and speed to keep a bicycle in motion.

With human-like anticipation and realism, you will not be able to tell the difference between your phone and a person. By learning texting habits, the phone will be able to respond to messages by itself, like having a bot to reply to those tedious chats. The new processor will make Bixby, Alexa, Siri and Cortana jealous.

This year’s mobile phones are on 7nm wafer processors which are already blurring the lines between desktop-grade CPUs and mobile CPUs. Qualcomm’s new Snapdragon 1000 chip is designed to compete with x86 chips and Nvidia’s GPU systems are marketed for AI applications.

Current leaders in mobile processors marketed with purported “AI capability” include MediaTek’s new Helio P90 and Qualcomm’s Snapdragon 855. Beyond 2019, major chip manufacturer TSMC has announced that its 5nm wafer fabrication process is ready for production for the next generation of processors, and Intel has announced its new Foveros 3D chip stacking technology.

The semiconductor industry has been pretty consistent in its projected advancements, with major players investing billions of dollars in R&D, and I expect to see a powerful CPU shrink down in size to fit my smartphone in 2025.

Computing Desktop environment

With all that processing power in a phone, do we really need a laptop or tablet for everyday computing tasks? The future phone will become your future laptop or desktop with a simple dock.

The idea is not new. Since 2012 Asus has had a product line, the PadFone, where its smartphone could be docked into a tablet – increasing the screen real estate and battery life of the phone.

This desktop functionality concept was recently updated by Razer’s Linda, Microsoft’s Continuum and Samsung’s DeX. Linda turns a smartphone into a trackpad that docks into a laptop body, whilst DeX is a dock for a phone which creates a familiar desktop computing environment. This desktop PC feature will be mainstream in future phones, just by plugging a reversible USB Type-C cable into the phone for both graphics and power. Examples today include Continuum and DeX, which can run from the companies’ flagship phones. You’d be surprised how something so simple still isn’t intuitive enough today.

Storage capacity could come from Intel’s ultrafast Optane, which is built on the 3D XPoint memory developed with Micron, while as of 2019 Samsung’s embedded Universal Flash Storage (eUFS) offers up to 1TB.

I envision that in 2025, we will all be carrying our PC in our pocket, looking for USB-C ports to plug our phones into so we can display our own instant-on PC at work, a friend’s home, or just about anywhere. I’ll wake up, undock my phone from its wireless-charging cradle and, when I reach work, I’ll just dock my phone into the cradle at my desk. There would be no need for a dedicated computer at work or at home. All files are stored on various cloud services (Dropbox, Google Drive, OneDrive), while persistent files are stored in the phone’s 16TB of storage.

At home or in the office, projectors and screens receive wireless display commands from the phone that are compatible with existing wireless display standards such as Apple’s AirPlay, Miracast, Intel Wireless Display (WiDi) and DLNA. As a computing desktop, our 2025 phone will push or stream a desktop screen to any TV, projector or screen that is compatible.

You’ll finally be free of lugging around a laptop. Just think about that.

Connectivity

The phone will have the latest connectivity options built into its communications chips.

5G-New Radio (5G-NR) is slated to replace 4G. 4G-LTE was introduced in 2009 and it took a few years for the infrastructure to become mainstream. 5G is in its infancy now, and Qualcomm has demonstrated a 900% improvement over existing 4G networks.

By 2021, we should see 5G become mainstream in mobile devices and commonplace in the 2025 phone, with upgrades over existing standards. 5G’s increased speed and bandwidth come from the use of a broader spectrum of frequencies and multiple antenna arrays. The standard also allows device-to-device communication, allowing your phone to be the central hub or base station controlling all your other IoT gadgets in the vicinity. Major chipmakers Qualcomm, Intel and Huawei all announced their 5G modems this year.

As for Wi-Fi connectivity, the IEEE 802.11ax standard, now referred to as Wi-Fi 6 and introduced just this year, will be mainstream in our Phone 2025.

The standard’s key feature – Multiple-input multiple-output orthogonal frequency-division multiplexing (MIMO-OFDM) – allows speeds five times faster than today’s fastest 802.11ac networks. Also, with CO-MIMO antenna arrays, users will experience even faster connectivity when there are several base-stations or routers nearby, as each data stream is broken up or provided by several routers. This is a very important speed upgrade for streaming 4K Mixed-Reality (4K-MR) data, as aptly shown in the Vimeo video in the next section.

With new bandwidth pipelines streaming directly to the future phone, users will demand ever greater instant high-quality content and information. Search, e-commerce and information avenues will grow ridiculously, with instant online demand from consumers. It is one of the reasons Google is paying a premium to have its search engine natively installed on Apple devices. The revenue line between the future phone and content/shopping services will blur and we could see major search engines and retailers putting resources into developing their own phones such as the Google Pixel 4 and Amazon’s foray into the smartphone market.

The motivation is simple: the future phone is the de facto portal to content, products, and services.

The Display

Screen technology has come a very long way over the last decade of smartphones, with pixel densities gradually increasing and pixel sizes slowly decreasing. The first high-density displays, marketed by Apple as a “retina-screen” and by Samsung as Super AMOLED, both exceeded 200 pixels per inch (ppi) back then; today we are at 458ppi on the iPhone 11 Pro and 401ppi on the Samsung S10.
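For anyone who wants to check a ppi figure, pixel density is simply the diagonal resolution in pixels divided by the diagonal screen size in inches. Here is a minimal Python sketch; the function name and the sample panel are my own illustrative choices, not taken from any spec sheet, and marketed figures can differ slightly because quoted diagonals are rounded.

```python
import math


def pixels_per_inch(width_px, height_px, diagonal_in):
    """Pixel density: diagonal resolution (px) over diagonal size (inches)."""
    # math.hypot gives the length of the screen diagonal in pixels.
    return math.hypot(width_px, height_px) / diagonal_in


if __name__ == "__main__":
    # Hypothetical 5.5-inch Full HD panel, used only as an example.
    print(round(pixels_per_inch(1080, 1920, 5.5)))
```

The same resolution on a smaller diagonal gives a higher ppi, which is why phone screens reach densities that TVs never need.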

Our smartphones have captured all of our visual attention. Americans spend three to four hours a day looking at their phones, and about 11 hours a day looking at screens of any kind. Needless to say, the screen is still the main interactive surface of the Phone 2025, but the technologies used to build the screen are going to be amazingly different. Today’s screens are typically built on AMOLED, IPS-LCD or OLED, but upcoming technologies such as microLED (mLED) are in the works.

I think that quantum-dot LEDs (QLEDs) will become a mature mobile screen technology capable of giving us the chromatic vibrance consumers demand. Quantum-dot displays are not a new technology and are staples of flagship television products today, with manufacturers touting the advantages of QLED over OLED TVs. A nice comparison is described here. However, QLED technology is still maturing and there is a massive commercial push to advance it.

I’m just going to call it what it is: the future phone will sport a QLED 4K ultra-resolution screen, likely based on electroluminescent quantum dots (ELQD), and… it will be transparent. Why do we need a transparent QLED screen?

We can now hide the front cameras and sensors behind the screen. Since the nano-pixels of the QLED screen are so small, tiny holes or gaps can be created between the light-emitting pixels to allow light through the screen. Looks like Oppo has already unveiled this cool feature!

No more notches or front-facing sensors taking up precious screen area. Just one big gorgeous edge-to-edge QLED screen.

Another cool feature of Phone 2025 is the use of smart nano-optics to create depth perception, allowing the screen to produce a 3D in-depth effect, a sort of holographic viewing experience. This capability is important when we use the phone in eXtended Reality (XR) applications.

This method is known as Visual Aberration Correction and utilizes computational light field displays. The technology pre-distorts the graphics for an observer, so that the target image is perceived without the need for corrective lenses.

This new screen is transparent to the cameras and biometric sensors behind it and allows depth/dioptre correction, so that the display adjusts according to the distance between your eyes and the screen. If the screen is close to the user’s eyes, it blurs or sharpens reciprocally, avoiding the need for corrective optics in XR headsets.

The Camera

With such a powerful brain in my future phone, we need just as powerful sensory inputs. Humans are arguably blessed with the best eyes in nature, but other animals do have their own vision advantages when compared to humans.

For example, cats see better at night, horses have a very large field of vision (350° vs a human’s puny 180°), and birds of prey have incredible long-range telescopic vision. Many animals have tetrachromatic (or better) vision, such as the mantis shrimp, which can see into the UV spectrum and a greater spectrum of light than humans!

So the trend of having multiple cameras took off once again with Apple, with the introduction of the iPhone 7 Plus’s dual camera, allowing for different lens elements (wide or telephoto). Manufacturers quickly caught onto the advantages of having more than one camera module and soon we had triple (Huawei’s P20 Pro & the Apple iPhone 11 Pro), quad (Samsung Galaxy A9) and even five cameras (Nokia 9 PureView).

The main difference between the cameras is their focal lengths. The shorter the focal length, the wider the angle of view and vice versa. It’s almost like carrying a full set of lenses in your pocket.
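To put numbers on the focal length trade-off, here is a minimal Python sketch using the standard thin-lens angle-of-view formula, angle = 2·atan(sensor width / (2·focal length)). I am assuming 35mm-equivalent focal lengths, so the 36mm sensor width default is a full-frame reference, not the size of any real phone sensor, and the function name is my own.

```python
import math


def horizontal_angle_of_view(focal_length_mm, sensor_width_mm=36.0):
    """Horizontal angle of view in degrees for a lens focused at infinity.

    Assumes a simple rectilinear lens; sensor_width_mm defaults to the
    36mm width of a full-frame sensor (35mm-equivalent focal lengths).
    """
    return math.degrees(2 * math.atan(sensor_width_mm / (2 * focal_length_mm)))


if __name__ == "__main__":
    # Illustrative 35mm-equivalent focal lengths: wide, main, telephoto.
    for focal_length in (18, 28, 70, 150):
        angle = horizontal_angle_of_view(focal_length)
        print(f"{focal_length}mm -> {angle:.1f} degrees")
```

Running it shows the angle of view shrinking steadily as the focal length grows, which is exactly why a camera array mixes short and long lenses.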

Ok so we’ve got some wide-angle shots and some nice zoomed-in shots. So what? What can we do with our two eyeballs that our phone’s camera array will allow us to do better?

It’s simple physics. More cameras mean the phone can capture more light, and that means impressive low-light vision and photography, a feature available in Huawei’s P30, Google’s Pixel 3 and Apple’s iPhone 11 Pro. I’m talking about Night-Vision.

So, the Phone 2025 will have an optically stabilized quad camera element array with the following camera capabilities:

- Telephoto Zoom

In 2007, I thought a liquid-zoom lens would be a cool feature to allow for optical zoom. After all, you still need actual physical distance to focus light from a distance onto the sensor. Then Oppo and Huawei both offered phones with lens elements embedded in a periscopic manner within the camera body. That works too; let’s have two in the future phone.

- Macro Mode (microscope)

With up to 1cm focal distance from the ultra-wide, ultra-high-resolution main camera, loss of resolution to achieve macro-distances

- Night Vision mode in real-time

First, each sensor combines four pixels into one, and then we have light being captured on all four sensors simultaneously to create a true low-light camera, something popular low-light camcorders are known for. An infrared matrix illuminator beside the camera will help illuminate pitch-black conditions.

- True-3D videos

A quad camera setup will provide stereoscopic vision and depth-differentiated videos. Because there are now always at least two stereoscopic cameras capturing footage along with distance information, Phone 2025 essentially becomes a 3D video camera capturing 3D volumetric videos and data. Capture 4K 120 frames per second (fps) 3D-video on this slick future phone.

- Super Resolution photos

Super Resolution is a technique that combines all the pixels from the different elements to form one ultra-large resolution photo. The technique differs slightly from night-vision mode, where all the pixels are layered on top of one another to create a brighter image. Super Resolution photos combine each pixel side by side to make a larger image. There are commercial cameras using this technique – the Light L16 Camera from Light.co contains 16 camera modules – five 28mm ƒ/2.0, five 70mm ƒ/2.0, and six 150mm ƒ/2.4 lenses giving a combined resolution of up to 52 million+ pixels! In fact LG has filed a patent for a 16-camera module phone. SIXTEEN.

You don’t need that many.

- Ultra-Slow-Motion Capture

Fast, sensitive cameras plus a crazy powerful processor equal ultra-slow-mo videos. Sony’s Xperia XZ3 can do 960fps at 1080p. I’d reckon Phone 2025 can do 4000fps at 1080p, no sweat. But the higher the fps, the smaller the resolution. Hey, you can’t have everything.

- 360° 3D videos

Something I would like to see integrated into Phone 2025 is a 360° camera. How cool is that? Today’s 360° cameras, such as the Insta360 and GoPro Fusion, already produce jaw-dropping video features such as “over capture”. Because the camera is capturing in 360°, traditional frames can be extracted from the spherical 360° video footage taken from a single camera point – giving the illusion of a panning camera with a moving subject, a ‘bullet-time’ effect and so on.

It’s like many cameras capturing the action all at once. This dream phone would be able to capture simultaneous video from the front and rear camera sensors. With four video streams, two from the front and two from the rear cameras, the AI-NPU stitches it all together.
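To make the difference between the night-vision and Super Resolution modes above concrete, here is a toy Python sketch. Images are plain lists of rows of brightness values, and all three function names are my own. It assumes the frames are already perfectly aligned; a real camera pipeline would also need registration, denoising and blending, none of which is attempted here.

```python
def bin_2x2(image):
    """Pixel binning: sum each 2x2 block into one brighter pixel."""
    rows, cols = len(image), len(image[0])
    return [
        [image[r][c] + image[r][c + 1] + image[r + 1][c] + image[r + 1][c + 1]
         for c in range(0, cols - 1, 2)]
        for r in range(0, rows - 1, 2)
    ]


def stack_frames(frames):
    """Night-vision style: layer same-size frames on top of one another."""
    return [[sum(pixels) for pixels in zip(*rows)] for rows in zip(*frames)]


def tile_side_by_side(frames):
    """Super-resolution style (naive): join frames into one wider image."""
    return [sum(rows, []) for rows in zip(*frames)]


if __name__ == "__main__":
    frame = [[1, 2], [3, 4]]
    print(bin_2x2(frame))                      # one pixel, four times the light
    print(stack_frames([frame, frame]))        # same size, brighter
    print(tile_side_by_side([frame, frame]))   # same brightness, wider
```

Binning and stacking trade resolution or frame count for brightness, while tiling keeps brightness and gains pixels, which is the distinction the two modes rely on.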

XR eXtended Reality

Phone 2025 has a powerful processor and powerful “eyes”. What else would be cool? Something like Tony Stark’s phone in the movie Iron Man 2, or rather, a mixed reality with AI machine vision that will enable one to mark out or pull data spatially from the environment.

Today, this is known as a technology-mediated experience that combines virtual and real-world environments and realities, often referred to as Augmented Reality (AR) or Mixed Reality (MR), where some aspect of the real world can be seen, like Microsoft’s HoloLens; or Virtual Reality (VR) like the VIVE system, where the user sees a video feed instead of the actual environment.

The ‘X’ in eXtended is a placeholder for the V in virtual reality (VR), the A in augmented reality (AR) or the M in mixed reality (MR), and XR can be used to casually group technologies such as VR, AR and MR together. In a nutshell, XR allows us to overlay digital objects or information on top of reality or, conversely, see physical objects as present in a digital scene. There have been many attempts such as the Ghost, and even this cool hyper-reality video concept done by Keiichi Matsuda and another concept by Unity.

Ok so what can we do with XR?

Simple stuff we can do today includes real-time translation and more: Google’s Translate app translates multiple languages, Photomath solves a math problem you take a picture of, and Google Maps helps you navigate in an urban environment.

When app stores for the smartphone were introduced, they paved the way for an industry of applications and businesses with promises of XR-enabled technologies that would revolutionize the way we interact with our future smartphones.

Things start to get interesting from here on. We can now take a selfie video of ourselves in real time and superimpose it on a virtual environment, and we can increase the size of a physical environment in a virtual environment. Video and audio transmogrification are now real-time too, with speech and video that can be voiced over or videoed over the actual footage. Remember the scene from the movie Mission: Impossible III, where the hero changed his voice using a voice-changing device? Audio-shopping is now possible, and so is video-shopping. With XR, we could have real-time video conversations with a foreign counterpart speaking another language. XR is going to be an incredible productivity changer.

Human Machine Interface Gesture Control

How are you going to control your fancy MR headset? With a smartphone that is enabled for XR and a computing desktop environment, chances are we could end up interacting with what’s called a Natural User Interface (NUI).

NUI lets users interact with a device with a minimal learning curve. An example is touch-less gesture control, which allows manipulating virtual objects much like physical ones. It removes the dependency on mechanical devices like a keyboard or mouse. Different approaches for gesture control include radar-type sensors from Google, Leap Motion’s hand tracking system, and the Bixi hand-gesture system, just to name a few.

Despite the options available, the challenge has been miniaturization. The sensor would be placed underneath the QLED screen and could either be an optical sensor or just plain old dual cameras with machine vision in action, and that’s not difficult to implement in a mobile device.

The truth is, having an NUI reduces the learning curve of new applications and is critical in XR applications, where the ability to emulate holding or interacting with a virtual object will greatly increase usability of our future phone on many productivity fronts.

Fancy a future with people waving and gesticulating at their phones? That’s body language indeed.

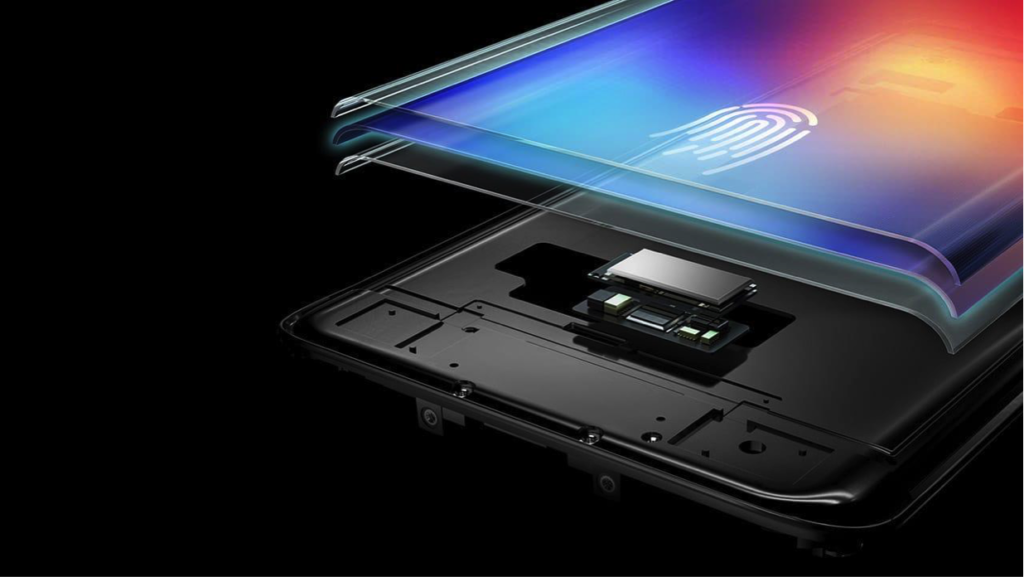

Hybrid Biometrics and Security

The Phone 2025 represents your entire digital life, and with that, we will need upgraded security. Since the fingerprint sensor debuted on the iPhone 5S, there have been some exciting developments in this aspect, such as facial and iris recognition on flagship smartphones such as the iPhone X and Samsung Galaxy S9.

But how do designers pursue better screen-to-bezel ratios without sacrificing fingerprint sensor footprints? This year, manufacturers introduced a dozen phone models with under-display fingerprint sensors, such as the Vivo X21, Oppo R17, Huawei P30 Pro, Samsung Galaxy S10, Honor 20 Pro and OnePlus 6T, with sensors supplied by Qualcomm, General Interface Solution (GIS), O-film Tech, Fingerprints and Goodix.

However, we’ve seen that fingerprint and facial recognition security methods can be spoofed or defeated. How can we create a more secure device without sacrificing screen real estate? I dub the next generation of biometrics in the future phone Multi-factor authentication (MFA), using no fewer than five biometric factors at pseudo-random intervals. A full-display fingerprint scanner, facial recognition, capacitive fingerprinting and blood-flow thermography are technologies that come to mind.

The entire QLED screen would authenticate each finger-press as we tap anywhere on the screen, something Apple patented in April 2019, and I envision the future phone to have thermogram sensors to capture heat information as you use the device. 3D face printouts or fingerprint hacks won’t work anymore, as the person using the phone must be a live human being.

Currently, the world’s smallest thermal camera is the Lepton from FLIR, which is available here and here, but at $350, it’s an expensive component to put into a phone. This is where a lower-cost component such as Panasonic’s thermo-graphic matrix sensor, known as the GRID-eye AMG8833, could be used.

The future phone will have at least three biometrics, including an in-screen fingerprint authenticator checking every time you type on the screen, and a thermal-augmented facial recognition scan. This MFA approach gives confidence that only the owner can access his very expensive, high-tech piece of gear.
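As a rough illustration of the MFA idea, here is a minimal Python sketch of challenging a random subset of biometric factors at randomized intervals. Everything in it is hypothetical: the factor names, the `readings` dictionary standing in for real sensor results, and the interval bounds are my own placeholders, not any vendor’s API.

```python
import random

# Hypothetical factor names, taken from the technologies mentioned above.
FACTORS = [
    "full_display_fingerprint",
    "facial_recognition",
    "capacitive_fingerprint",
    "blood_flow_thermography",
]


def authenticate(readings, required=3, rng=None):
    """Challenge `required` randomly chosen factors; all of them must pass.

    `readings` maps a factor name to True/False (a stand-in for a sensor
    match). A missing factor counts as a failure.
    """
    rng = rng or random.SystemRandom()
    challenged = rng.sample(FACTORS, required)
    return all(readings.get(name, False) for name in challenged)


def next_check_delay(rng=None, min_seconds=5.0, max_seconds=60.0):
    """Pick a randomized wait before the next background re-check."""
    rng = rng or random.SystemRandom()
    return rng.uniform(min_seconds, max_seconds)


if __name__ == "__main__":
    owner = {name: True for name in FACTORS}
    print(authenticate(owner))
    print(f"next check in {next_check_delay():.0f}s")
```

Because the attacker cannot predict which factors will be challenged or when, spoofing a single biometric such as a printed face or a lifted fingerprint is not enough on its own.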

Imagine using your phone to unlock your work monitor. There won’t be nosy co-workers trying to guess your password or spoof your fingerprint reader. There’s nothing to break into if the device isn’t even there.

Phone 2025 Vision

Looks like I’m going to wait out the next few phone releases till Phone 2025 is released!
